Protect AI Blog

Red Teaming

August 15, 2025

Automated Red Teaming Scans of Dataiku Agents Using Protect AI Recon

7 minute read

Read more

Red Teaming

August 8, 2025

Strengthening AI Security with Protect AI Recon & Dataiku Guard Services

3 minute read

Read more

Red Teaming

July 16, 2025

Llama 4 Series Vulnerability Assessment: Scout vs. Maverick

10 minute read

Read more

Adversarial ML

June 23, 2025

AI Risk Report: Fast-Growing Threats in AI Runtime

3 minute read

Read more

GenAI

June 17, 2025

The Cost of Being Wordy: Detecting Resource-Draining Prompts

16 minute read

Read more

Cybersecurity

June 12, 2025

Security Spotlight: AppSec to AI, a Security Engineer's Journey

7 minute read

Read more

Adversarial ML

June 4, 2025

Balancing Velocity and Vulnerability with llamafile

5 minute read

Read more

Secure by Design

May 28, 2025

Security Spotlight: Securing Cloud & AI Products with Guardrails

17 minute read

Read more

Red Teaming

May 21, 2025

Assessing the Security of 4 Popular AI Reasoning Models

11 minute read

Read more

LLM Security

May 13, 2025

Specialized Models Beat Single LLMs for AI Security

7 minute read

Read more

Red Teaming

May 7, 2025

GPT-4.1 Assessment: Critical Vulnerabilities Exposed

12 minute read

Read more

Model Security

April 23, 2025

Introducing Guardian Local Scanning: Streamlined Model Security

4 minute read

Read more

Model Security

April 23, 2025

Implementing Advanced Model Security for Custom Model Import in Amazon Bedrock

30 minute read

Read more

Red Teaming

April 23, 2025

Building Robust LLM Guardrails for DeepSeek-R1 in Amazon Bedrock

35 minute read

Read more

Secure by Design

April 22, 2025

Secure by Design for AI: A Real-World Healthcare Case Study

9 minute read

Read more

Secure by Design

April 16, 2025

Tools and Technologies for Secure by Design AI Systems

10 minute read

Read more

Machine Learning

April 16, 2025

Machine Learning Models: A New Attack Vector for an Old Exploit

6 minute read

Read more

Model Security

April 14, 2025

4M Models Scanned: Hugging Face + Protect AI Partnership Update

9 minute read

Read more

Cybersecurity

April 11, 2025

Security Spotlight: Embracing a Culture of Security at Protect AI

6 minute read

Read more

LLM Security

April 8, 2025

MCP Security 101: A New Protocol for Agentic AI

9 minute read

Read more

Secure by Design

April 3, 2025

Securing Agentic AI: Where MLSecOps Meets DevSecOps

12 minute read

Read more

Red Teaming

April 2, 2025

Qwen2.5-Max Vulnerability Assessment

15 minute read

Read more

Artificial Intelligence

March 27, 2025

The Expanding Role of Red Teaming in Defending AI Systems

5 minute read

Read more

Adversarial ML

March 27, 2025

A CISO’s Guide to Securing AI Models

5 minute read

Read more

LLM Security

March 27, 2025

A Step-by-Step Guide to Securing LLM Applications

6 minute read

Read more

Secure by Design

March 26, 2025

Building Secure by Design AI Systems: A Defense in Depth

9 minute read

Read more

Secure by Design

March 26, 2025

The Evolution of AI Security: Why Secure by Design Matters

6 minute read

Read more

Red Teaming

February 12, 2025

Automated Red Teaming Scans of Databricks Mosaic AI Model Serving Endpoints Using Protect AI Recon

10 minute read

Read more

LLM Security

February 10, 2025

Breaking Down LLM Security: 3 Key Risks

6 minute read

Read more

Secure by Design

February 7, 2025

Secure by Design: Why Protect AI Signed CISA's Pledge

7 minute read

Read more

Model Security

January 28, 2025

Using Protect AI's Products to Analyze DeepSeek-R1

9 minute read

Read more

LLM Security

January 28, 2025

Why eBPF is Secure: A Look at the Future Technology in LLM Security

6 minute read

Read more

MLSecOps

January 8, 2025

MLSecOps: The Foundation of AI/ML Security

4 minute read

Read more

MLSecOps

December 11, 2024

How To Secure AI With MLSecOps

5 minute read

Read more

LLM Security

December 6, 2024

Layer’s agentless approach to securing enterprise LLM applications

3 minute read

Read more

LLM Security

December 4, 2024

How Protect AI is shaping the future of LLM Security at runtime with eBPF

4 minute read

Read more

Red Teaming

November 25, 2024

Why Automated Red Teaming is Essential for GenAI Security

11 minute read

Read more

Model Security

October 25, 2024

Supporting the safe and secure usage of the world's largest AI/ML Model Repository

5 minute read

Read more

AI ZeroDay

October 23, 2024

4 Ways to Address Zero-Days in AI/ML Security

5 minute read

Read more

LLM Security

October 8, 2024

Out of Line Threat Scanning for LLMs: Some Real-World Examples

6 minute read

Read more

LLM Security

September 27, 2024

RAG Security 101

9 minute read

Read more

LLM Security

August 28, 2024

Why LLMs Are Just the Tip of the AI Security Iceberg

6 minute read

Read more

LLM Security

July 24, 2024

LLM Security: Going Beyond Firewalls

10 minute read

Read more

Red Teaming

July 3, 2024

The Crucial Role of the AI Red Team in Modern Cybersecurity

6 minute read

Read more

Threat Intelligence

June 20, 2024

Navigating Vulnerabilities in the AI Supply Chain

6 minute read

Read more

Model Security

June 10, 2024

The Trojan Horses Haunting Your AI Models

4 minute read

Read more

LLM Security

May 30, 2024

AI Agents: Chapter 3 - Practical Approaches to AI Agents Security

7 minute read

Read more

Industry News

May 24, 2024

The role of cybersecurity in AI system development

4 minute read

Read more

Industry News

May 23, 2024

Does Your Company Need A Chief AI Officer?

6 minute read

Read more

LLM Security

April 24, 2024

AI Agents: Chapter 2 - The Thin Line between AI Agents and Rogue Agents

10 minute read

Read more

LLM Security

April 24, 2024

NEW to LLM Guard - Next Gen v2 Prompt Injection Model

8 minute read

Read more

LLM Security

April 3, 2024

AI Agents: Chapter 1 - (Ground)breaking LLMs?

5 minute read

Read more

LLM Security

March 13, 2024

Hiding in Plain Sight: The Challenge of Prompt Injections in a Multi-Modal World

4 minute read

Read more

LLM Security

March 5, 2024

Preventing LLM Meltdowns with LLM Guard

5 minute read

Read more

MLSecOps

March 5, 2024

How MLSecOps Can Reshape AI Security

8 minute read

Read more

LLM Security

February 21, 2024

Advancing LLM Adoption and Enhancing Security Against Invisible Prompt Injections with LLM Guard

5 minute read

Read more

Model Security

January 23, 2024

How To Use AI/ML Technology Securely with Open-Source Tools from Protect AI

12 minute read

Read more

Adversarial ML

January 16, 2024

A CISO’s perspective on how to understand and address AI risk

6 minute read

Read more

Adversarial ML

January 10, 2024

Adapting Security to Protect AI/ML Systems

7 minute read

Read more

PAI Updates

December 15, 2023

Protect AI Named on the Fortune Cyber60 List

2 minute read

Read more

PAI Updates

December 12, 2023

Protect AI CEO, Ian Swanson, Delivers Testimony In Congressional Hearing on AI Security

7 minute read

Read more

PAI Updates

August 3, 2023

Announcing ModelScan: Open Source Protection Against Model Serialization Attacks

11 minute read

Read more

PAI Updates

July 26, 2023

The Time is Now to Protect AI

4 minute read

Read more

Industry News

June 15, 2023

Alphabet Spells Out AI Security

5 minute read

Read more

Threat Intelligence

June 6, 2023

Secure Your Python Projects with Dummies

7 minute read

Read more

Threat Intelligence

June 5, 2023

Hacking AI: System Takeover in MLflow Strikes Again (And Again)

13 minute read

Read more

Industry News

May 25, 2023

What’s Old is New - Natural Language as the Hacking Tool of Choice

5 minute read

Read more

Industry News

May 16, 2023

A Tale of Two LLMs - Safety vs. Complexity

4 minute read

Read more

Industry News

May 8, 2023

Blog Byte: Spherical Steaks in ML. “Say what?!”

3 minute read

Read more

Employee Spotlight

March 31, 2023

Employee Spotlight: Josh Miles

3 minute read

Read more

Employee Spotlight

March 30, 2023

Employee Spotlight: Dan McInerney

2 minute read

Read more

Employee Spotlight

March 30, 2023

Employee Spotlight: Faisal Khan

2 minute read

Read more

MLSecOps

March 13, 2023

Hacking AI: System and Cloud Takeover via MLflow Exploit

23 minute read

Read more

Threat Intelligence

March 7, 2023

AI Zero Day Found in MLflow

9 minute read

Read more

Threat Intelligence

March 6, 2023

Hacking AI: Steal Models from MLflow, No Exploit Needed

10 minute read

Read more

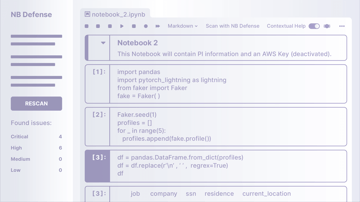

PAI Updates

February 27, 2023

NB Defense Now in Public Beta

11 minute read

Read more

PAI Updates

November 16, 2022

Why We Are Building Protect AI

3 minute read

Read more

PAI Updates

November 16, 2022

Announcing NB Defense: The Starting Point of ML Security

18 minute read

Read more

MLSecOps

October 21, 2022

AI Zero Days: Why we need MLSecOps, now.

8 minute read

Read more