Guardian

Defend against unseen threats to innovate securely using any AI model.

AI Model Security with Zero Compromises

Accelerated, Secure AI Innovation

Quickly and confidently adopt AI models from diverse sources without compromising on security. Guardian’s flexible, granular policies and native Hugging Face integration make it possible to safely leverage open source options and stay at the forefront of AI innovation.

Cutting-Edge Scanners

Guardian offers the widest and deepest set of model scanners on the market. It identifies deserialization attacks, architectural backdoors, and runtime threats across all major model formats. Powered by threat research from over 17,000 security researchers, Guardian evolves faster than emerging AI threats.

Effortless Integration

Built by AI pioneers, Guardian integrates seamlessly into existing AI pipelines, DevOps workflows, repositories, and research environments, no matter the complexity. Protect your most sensitive intellectual property with distributed, on-premises, and local scanning.

Key Features

Best-in-Class Model Scanners

Guardian scans more than 35 model formats (including PyTorch, TensorFlow, ONNX, Keras, Pickle, GGUF, Safetensors, and LLM-specific formats), detecting deserialization attacks, architectural backdoors, and runtime threats. Guardian stays ahead of new vulnerabilities by drawing on huntr, our security research community, and by continuously scanning every public model on Hugging Face.

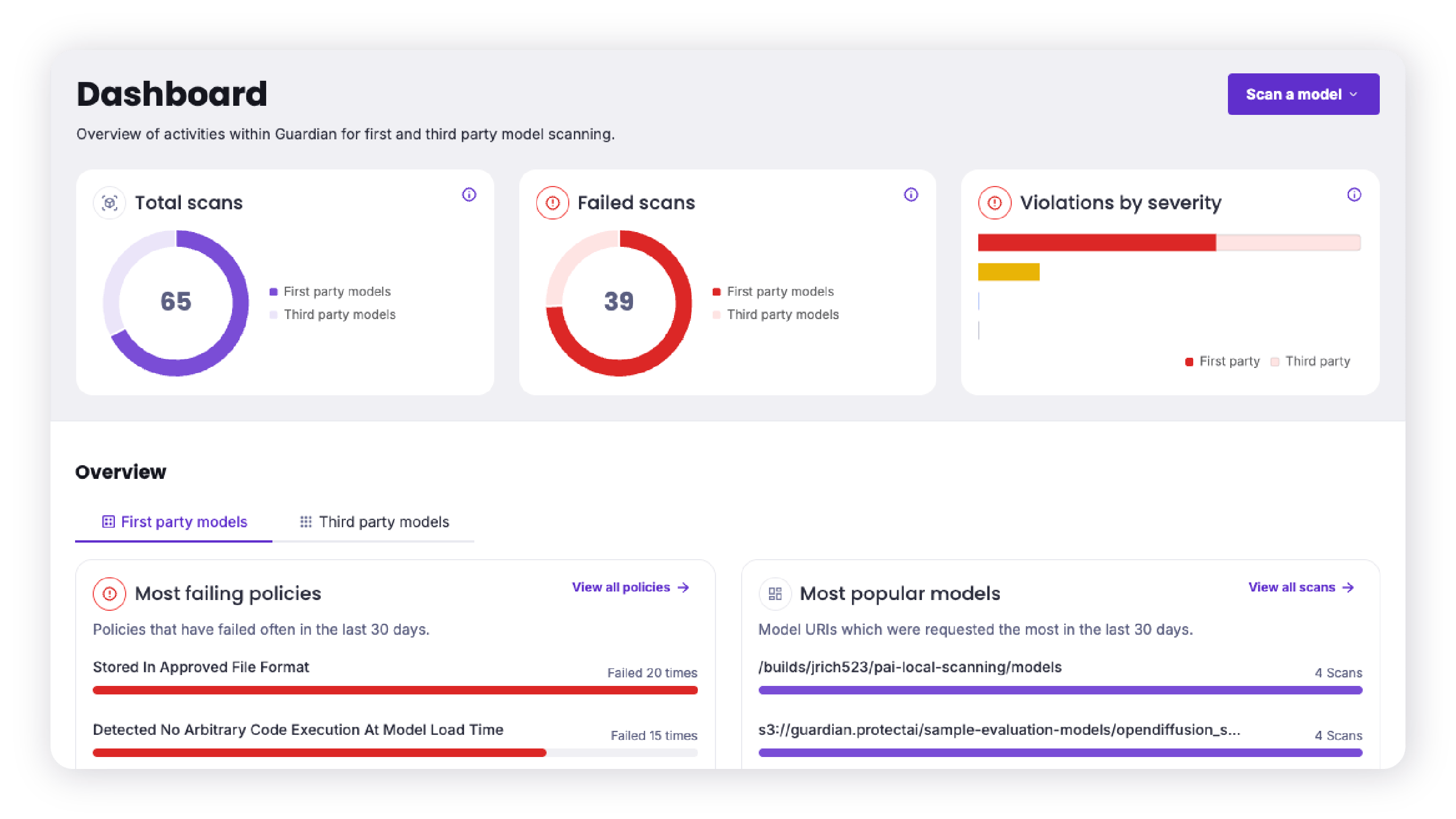

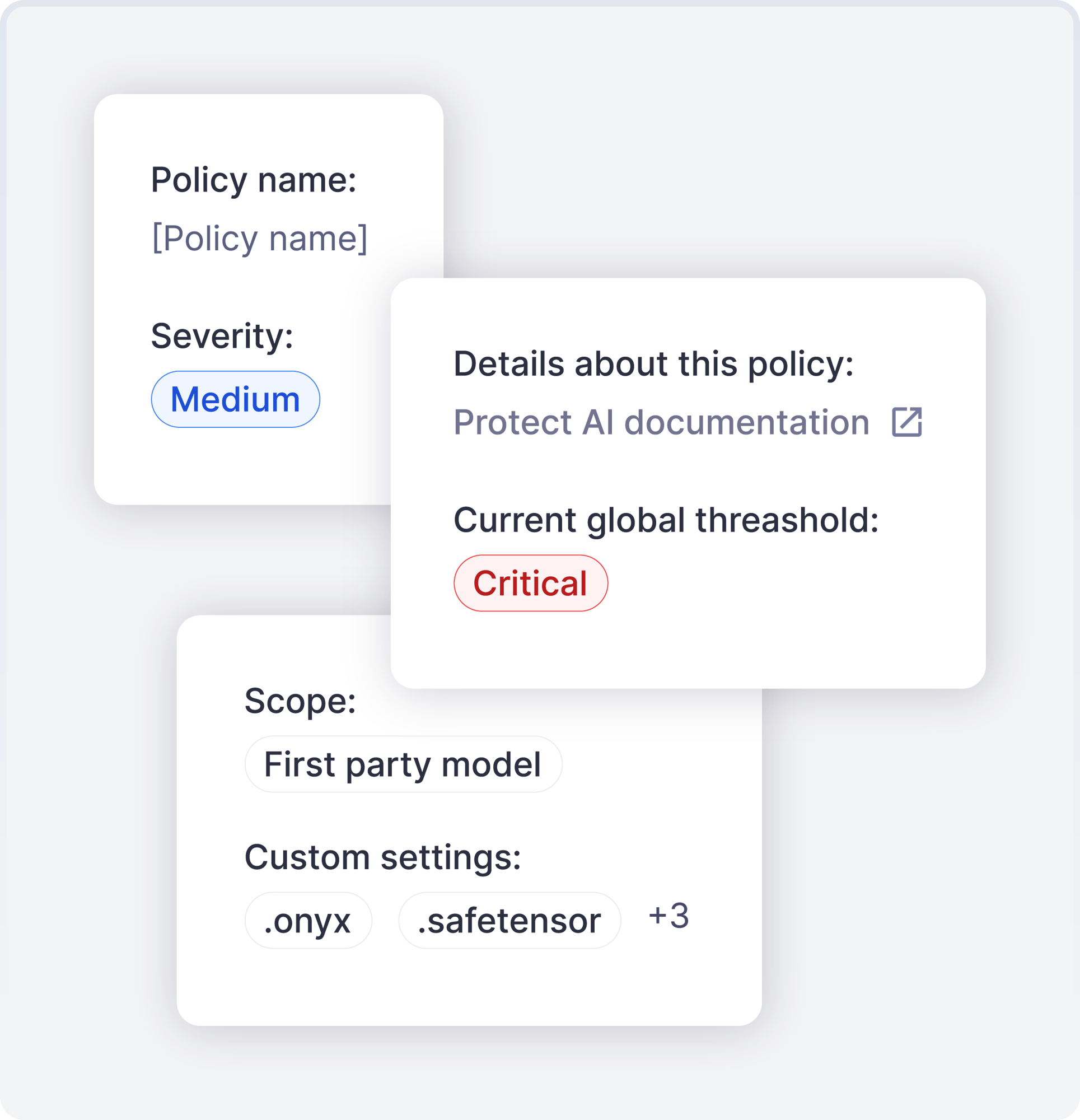

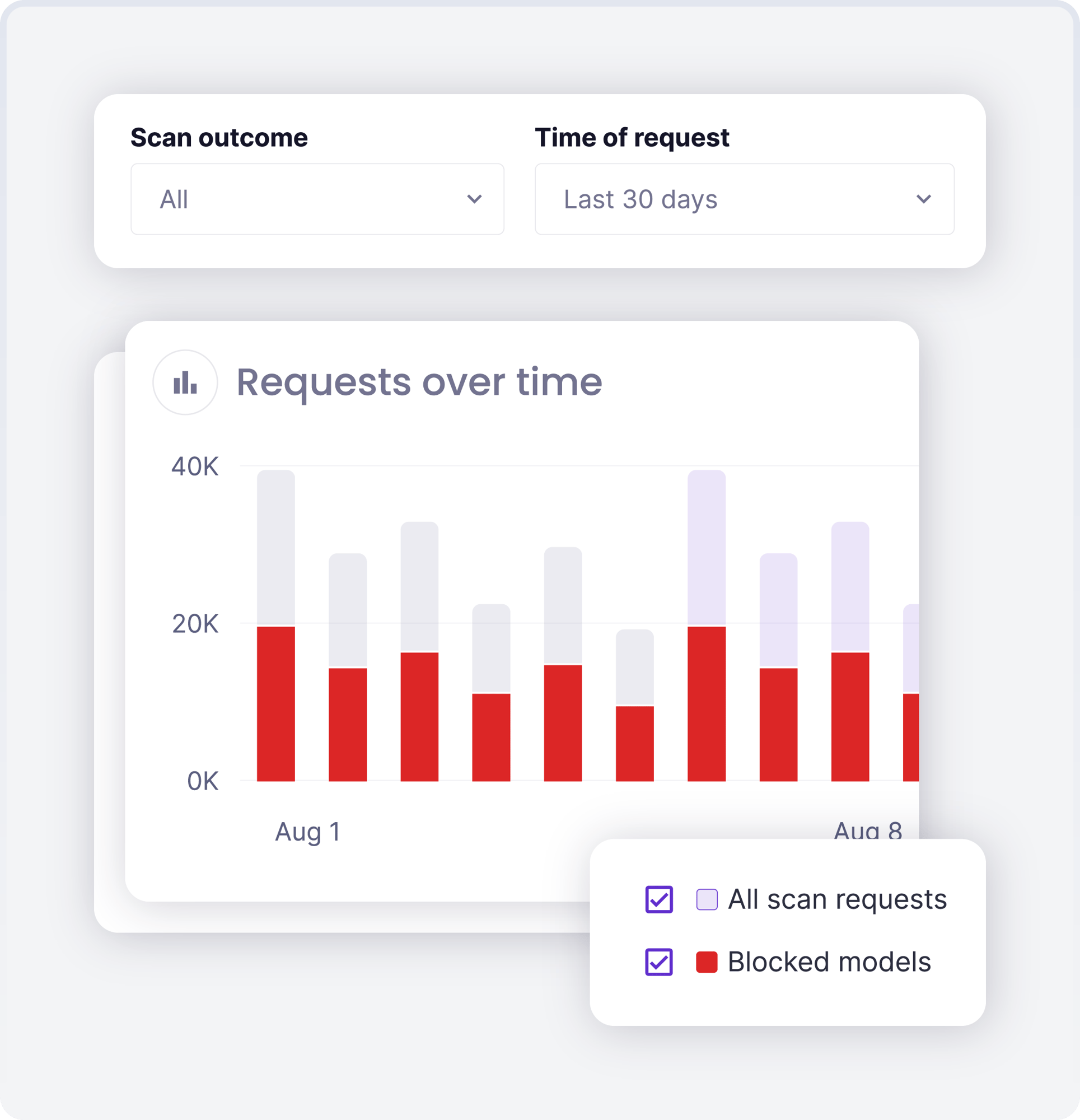

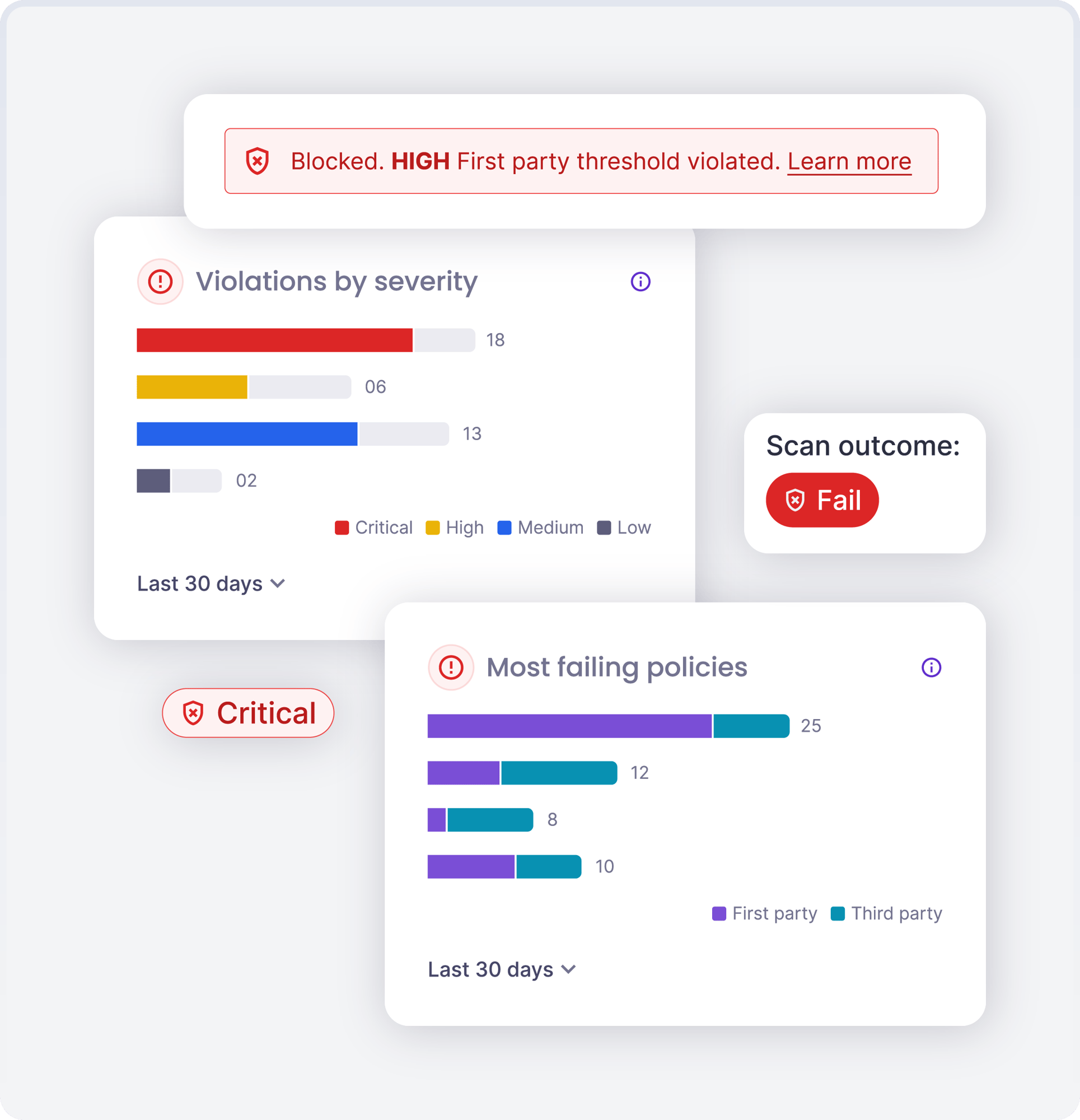

Configurable, Customizable Policies

Guardian gives your teams flexible policies that can be customized for first- and third-party models. Granular security rules for model metadata, approved formats, verified sources, and security findings enable comprehensive governance aligned with your specific security requirements and risk tolerance.

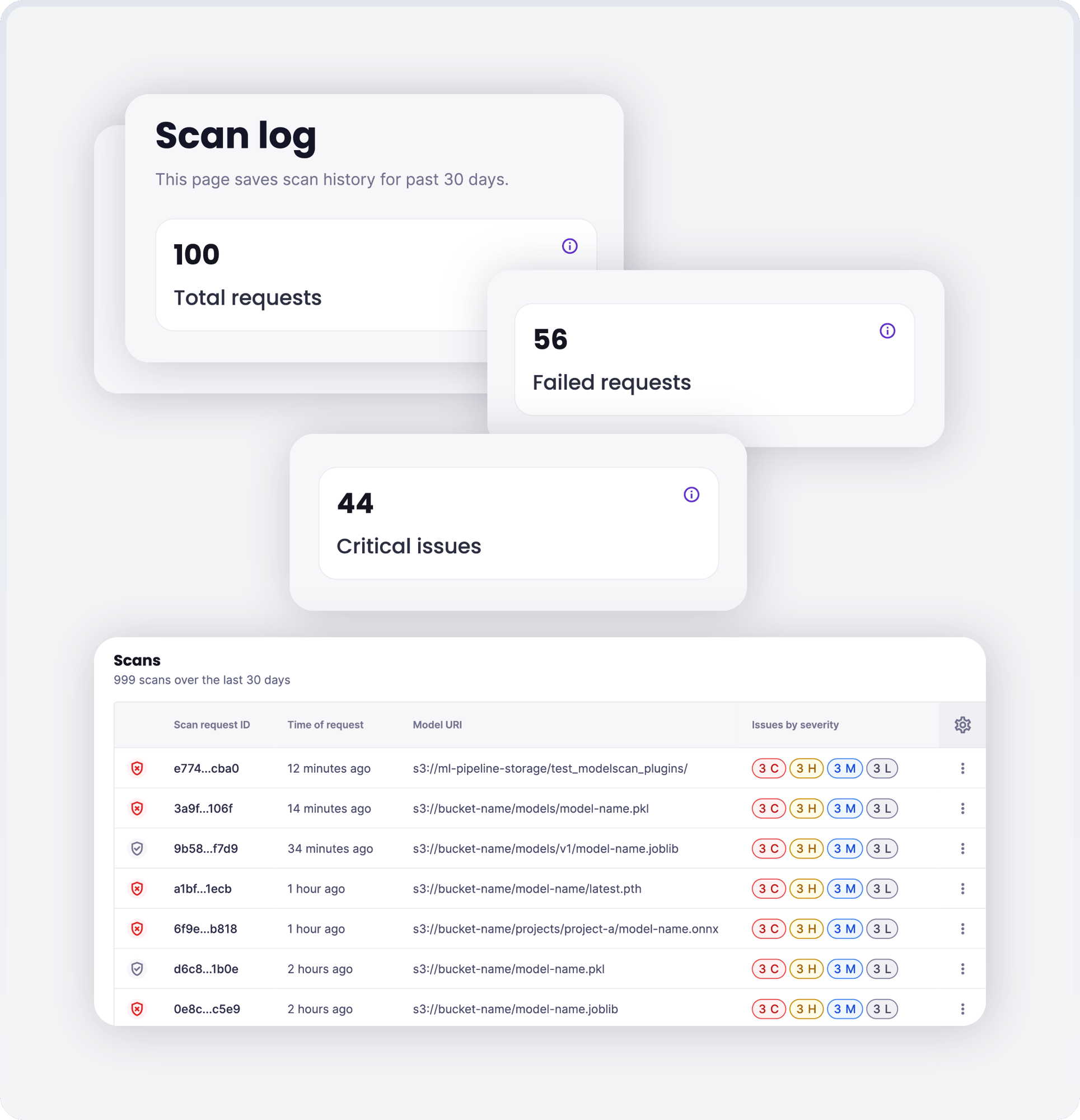
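As a rough illustration of how granular rules like these combine into a single pass/fail decision, the sketch below evaluates a model record against a policy. Every name in it (`Policy`, `ModelRecord`, `evaluate`, the field names, and the severity scale) is hypothetical and chosen for this example. It does not reflect Guardian's actual policy schema or API.

```python
from dataclasses import dataclass

# Hypothetical severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["none", "low", "medium", "high", "critical"]


@dataclass
class ModelRecord:
    """Hypothetical summary of a scanned model."""
    name: str
    file_format: str
    source: str
    license: str                    # one example of model metadata
    worst_finding: str = "none"     # highest severity among scan findings


@dataclass
class Policy:
    """Hypothetical policy: each field corresponds to one kind of rule."""
    approved_formats: set
    verified_sources: set
    allowed_licenses: set
    max_allowed_severity: str = "low"


def evaluate(policy: Policy, model: ModelRecord) -> list[str]:
    """Return a list of violations; an empty list means the model passes."""
    violations = []
    if model.file_format not in policy.approved_formats:
        violations.append(f"format '{model.file_format}' is not approved")
    if model.source not in policy.verified_sources:
        violations.append(f"source '{model.source}' is not verified")
    if model.license not in policy.allowed_licenses:
        violations.append(f"license '{model.license}' is not allowed")
    worst = SEVERITY_ORDER.index(model.worst_finding)
    limit = SEVERITY_ORDER.index(policy.max_allowed_severity)
    if worst > limit:
        violations.append(
            f"finding severity '{model.worst_finding}' exceeds "
            f"'{policy.max_allowed_severity}'"
        )
    return violations


policy = Policy(
    approved_formats={"safetensors", "onnx"},
    verified_sources={"internal-registry", "huggingface"},
    allowed_licenses={"apache-2.0", "mit"},
)
first_party = ModelRecord(
    "sentiment-v2", "safetensors", "internal-registry", "apache-2.0"
)
third_party = ModelRecord(
    "third-party-llm", "pickle", "unknown-mirror", "apache-2.0", "high"
)

print(evaluate(policy, first_party))   # []
for violation in evaluate(policy, third_party):
    print(violation)
```

Returning the full list of violations, instead of stopping at the first one, gives the model owner everything they need to fix in one pass.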

Local Scanning

Run Guardian directly in your CI/CD pipelines as a lightweight Docker container. Scan models from any source, including Artifactory, SageMaker Model Registry, and Git repositories. Get immediate security feedback while maintaining a comprehensive audit trail of all evaluations in your centralized Guardian environment.

Composable Security for Modern AI Workflows

Guardian integrates into any ML pipeline or DevOps workflow through the CLI, the SDK, or the Local Scanner. It supports diverse model sources such as Hugging Face, MLflow, S3, and SageMaker, adapting to your existing infrastructure without disruption.

Hugging Face Integration

Guardian continuously scans every public model on Hugging Face, over 1.5 million to date, to stay ahead of emerging model risks. This integration enables your team to confidently adopt open source models while maintaining security standards. It also creates a more secure AI ecosystem for community creators and users, democratizing model access without sacrificing protection.

Are You Ready to Start Securing Your AI End-to-End?

Request a demo to see how Guardian enforces model security without hindering innovation.