Secure Your LLM Applications

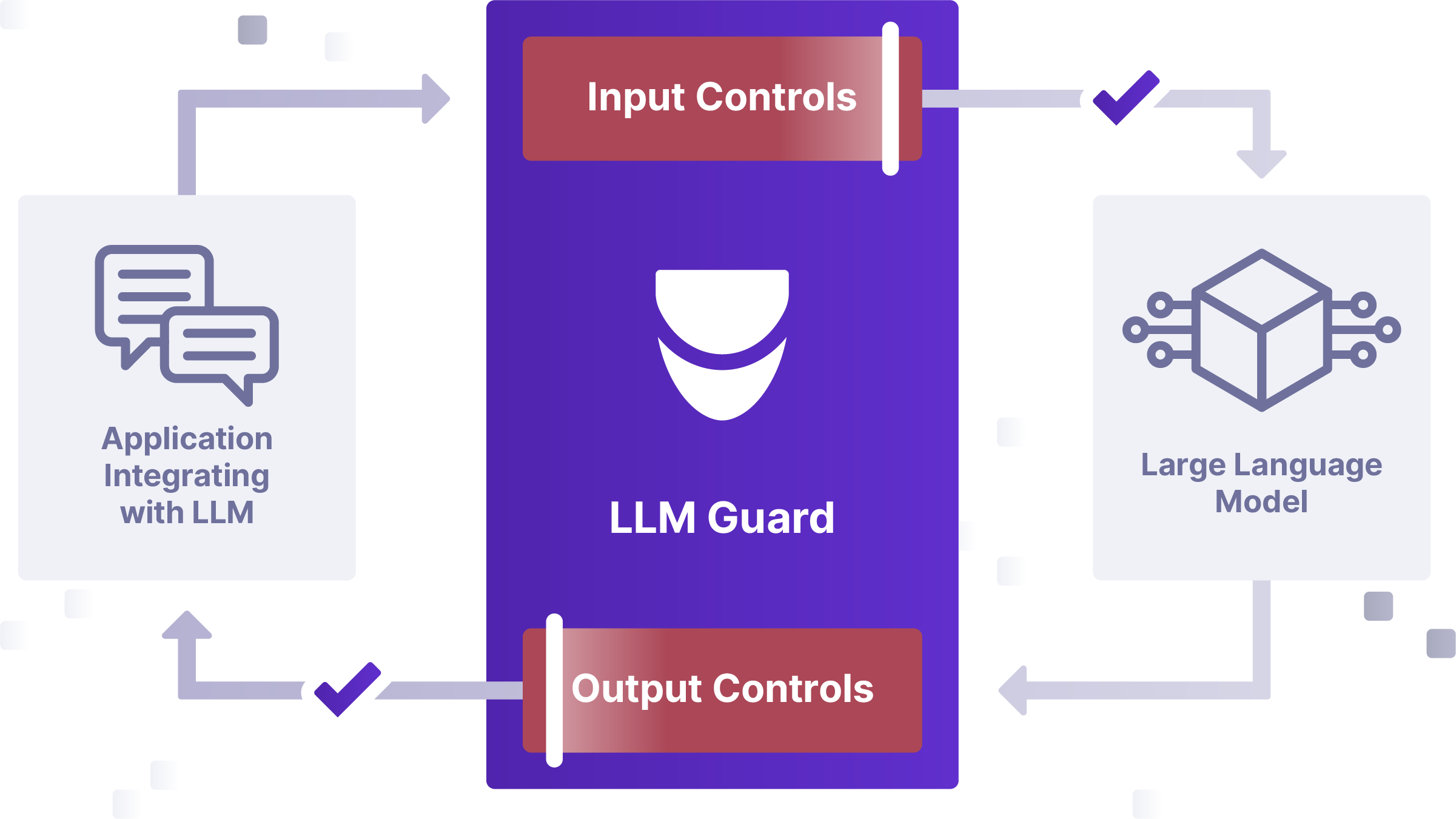

LLM Guard is a suite of tools that protects LLM applications by helping you detect, redact, and sanitize LLM prompts and responses for real-time safety, security, and compliance.

LLM Security without compromising cost, speed, or accuracy

Generative AI applications built on LLMs are key to unlocking digital transformation, but they can pose significant security risks. Evolving threats range from malicious prompt injections to data leakage in RAG (Retrieval-Augmented Generation) pipelines. These applications also raise governance and policy concerns, such as sensitive data being exposed in LLM outputs.

With built-in sanitization, detection of harmful language, prevention of data leakage, and resistance to prompt injection attacks, LLM Guard helps keep user interactions with LLMs safe and secure.

LLM Security

Advanced input and output scanners protect your LLM applications from data leakage, prompt injection attacks, and much more.

LLM Model-Agnostic

Deploy with any LLM, including GPT, Llama, Mistral, and Falcon, and with any LLM framework or platform, from Azure OpenAI and Bedrock to LangChain and beyond.

Easy to Deploy

Integrate within minutes, as a library or an API. Extensive documentation with use cases and playbooks helps you secure your LLM applications quickly.

Securely and safely deploy LLMs

Advanced Input and Output Scanners

LLM Guard includes advanced scanners that defend against data leakage and adversarial attacks and that support content moderation. They anonymize PII, redact secrets, and counteract threats such as prompt injections and jailbreaks, and they can be tailored to specific LLM use cases.
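As a rough illustration of the scanner idea described above, the sketch below chains a few simple input scanners over a prompt. It is not LLM Guard's implementation or API: the function names, the regex patterns, the placeholder strings, and the phrase list are all hypothetical, and a real scanner would rely on much broader detection than a couple of regular expressions.

```python
import re
from typing import Callable, List, Tuple

# Hypothetical patterns, for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
AWS_KEY_RE = re.compile(r"\bAKIA[0-9A-Z]{16}\b")

# Each scanner returns (possibly sanitized text, is_valid).
ScanResult = Tuple[str, bool]
Scanner = Callable[[str], ScanResult]


def redact_emails(text: str) -> ScanResult:
    """Replace email addresses with a placeholder; never blocks the prompt."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text), True


def redact_secrets(text: str) -> ScanResult:
    """Replace strings shaped like AWS access key IDs; never blocks the prompt."""
    return AWS_KEY_RE.sub("[REDACTED_SECRET]", text), True


def block_injection(text: str) -> ScanResult:
    """Flag prompts containing a known injection phrase (naive substring check)."""
    phrases = ("ignore previous instructions", "ignore all previous instructions")
    is_valid = not any(p in text.lower() for p in phrases)
    return text, is_valid


def run_scanners(scanners: List[Scanner], prompt: str) -> ScanResult:
    """Apply scanners in order, passing each one the sanitized output of the last."""
    valid = True
    for scan in scanners:
        prompt, ok = scan(prompt)
        valid = valid and ok
    return prompt, valid
```

Calling `run_scanners([redact_emails, redact_secrets, block_injection], prompt)` returns the sanitized prompt together with a flag that the application can use to decide whether to forward the prompt to the LLM.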

Cost Optimized

LLM Guard is engineered for cost-effective CPU inference, with 5x lower inference costs on CPU than on GPU.

Low Latency, High Accuracy

LLM Guard has been downloaded over 2.5 million times, setting the benchmark for speed and accuracy in LLM security. It provides unmatched reliability through advanced regex analysis and URL reachability checks, offering the market's most precise LLM security solution.
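To make the regex analysis and URL reachability ideas more concrete, here is a minimal sketch of an output check that pulls URLs out of a model response and reports the ones a checker considers unreachable. It is an assumption-laden illustration, not LLM Guard's code: `extract_urls`, `unreachable_urls`, the URL pattern, and the injected `is_reachable` callback are hypothetical names chosen for this example.

```python
import re
from typing import Callable, List

# Hypothetical, deliberately simple URL pattern for illustration.
URL_RE = re.compile(r"https?://[^\s<>)\]]+")


def extract_urls(text: str) -> List[str]:
    """Find http(s) URLs and strip trailing sentence punctuation."""
    return [u.rstrip(".,;:!?") for u in URL_RE.findall(text)]


def unreachable_urls(text: str, is_reachable: Callable[[str], bool]) -> List[str]:
    """Return the URLs in text that the supplied checker reports as unreachable.

    The checker is injected so this sketch runs offline; in practice it
    might issue an HTTP request with a short timeout.
    """
    return [u for u in extract_urls(text) if not is_reachable(u)]
```

Injecting the reachability check as a function keeps the regex step testable without network access and lets the application decide how strict the check should be.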

Open-Source

LLM Guard is a permissively licensed open-source project that enables rapid innovation and transparency and is accessible to all. A commercial version with expanded features, capabilities, and integrations within the Protect AI platform is coming soon.