Announcing NB Defense: The Starting Point of ML Security

Introduction

Prior to joining Protect AI as Head of Product, I worked at AWS as a Solutions Architect focusing on their AI and ML (Machine Learning) services, specifically on new services being incubated and their first few years in the wild. Security was limited primarily to: MFA requirements, IAM roles following the principle of least privilege, infrastructure as code for deployments, and encryption for transit and at rest. I never explored the security of my data science work beyond that.

Jupyter Notebooks were always my first step for projects, and they helped me build content to educate my peers and customers on how to get the most out of our services. Yet none of the tooling I used for securing traditional code bases worked for the code inside my notebooks.

In a rush to prove some specific functionality, I might commit the sin of writing some form of authentication token directly into my notebook, installing a package that had not yet made it through security approval for dev work, and perhaps even allowing IAM permissions that were too expansive just to get something to work before spending hours refining it to meet the security standards of AWS.

Any review of notebooks can miss things, even if you are more diligent than I was. Stack traces in notebooks can contain sensitive credentials or PII, and libraries that were once approved may since have been found to contain malicious code or exploits. Given the responsibility we have for the data at our disposal when doing our jobs as data scientists, we need to address the real security concerns that using a tool like Jupyter creates.

Security Problems with Jupyter Notebooks

Jupyter Notebooks are the backbone of many data scientists’ workloads. They allow any code to be quickly written and executed: you can instantly install third-party tools, explore data or models interactively, and even share your work with your peers. Each of those tasks creates a new threat vector for malicious actors and requires effort on everyone’s behalf to help secure it.

In 2021 there were over 40,000 installs of malicious packages from PyPI that were masquerading as official packages [1]. In 2022, 29 official packages were found to contain malicious exploits in their official releases [2]. Once one of these packages is installed in your environment and imported, it may have already executed its payload. It could be used to harvest your data, carry out a ransomware attack, or even modify the models you build. In all cases, it is difficult to identify the full impact.
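To make the import-time risk concrete, here is a minimal and deliberately harmless sketch. It is not taken from any real malicious package; the module name `totally_legit_utils` and the marker file are hypothetical. It only shows that Python runs a module's top-level code the moment the module is imported, before you call anything in it.

```python
import importlib
import sys
import tempfile
from pathlib import Path


def import_runs_top_level_code() -> bool:
    """Write a throwaway module, import it, and report whether its
    top-level code ran as a side effect of the import."""
    with tempfile.TemporaryDirectory() as tmp:
        marker = Path(tmp) / "payload_ran.txt"
        module_path = Path(tmp) / "totally_legit_utils.py"  # hypothetical name
        # Everything at module level executes on import, before the caller
        # ever uses a single function from the package.
        module_path.write_text(
            "from pathlib import Path\n"
            f"Path({str(marker)!r}).write_text('side effect')\n"
            "def helper():\n"
            "    return 'looks harmless'\n"
        )
        sys.path.insert(0, tmp)
        try:
            importlib.invalidate_caches()
            importlib.import_module("totally_legit_utils")
            return marker.exists()
        finally:
            # Leave the interpreter as we found it.
            sys.path.remove(tmp)
            sys.modules.pop("totally_legit_utils", None)


if __name__ == "__main__":
    print("Top-level code ran on import:", import_runs_top_level_code())
```

Here the side effect is only a marker file in a temporary directory, but the same mechanism is what lets a malicious package act as soon as a notebook cell imports it.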

Your IT Security group may issue policies that, for example, limit a notebook server’s ability to write to certain S3 buckets, require MFA to access the notebook server itself, or even reject models created in notebooks from entering a model registry. Those can be key parts of a process for securing your ML pipeline, but they ignore what is in your notebooks.

Existing open-source and commercial static code scanning tools do not work on Jupyter Notebooks because they do not understand the notebook file format, which mixes code, markup, and shell scripting. This is why we made NB Defense.
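To see why a scanner built for plain source files struggles here, it helps to look at what a notebook file actually is: a JSON document whose cells hold Python, shell escapes, and markup side by side. The sketch below uses only the standard library and a tiny hand-written notebook. It illustrates the file format, and is not a description of how NB Defense parses notebooks; the function name and the sample content are assumptions made for this example.

```python
import json

# A tiny, hand-written notebook in the nbformat 4 JSON layout (illustrative only).
SAMPLE_NOTEBOOK = json.dumps({
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {"cell_type": "markdown", "metadata": {}, "source": ["# Churn model\n"]},
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 1,
            "source": ["!pip install pandas\n", "import pandas as pd\n"],
            "outputs": [],
        },
    ],
})


def split_notebook(raw: str) -> dict:
    """Separate a notebook's lines into Python, shell/magic, and markup."""
    parts = {"python": [], "shell_or_magic": [], "markup": []}
    for cell in json.loads(raw)["cells"]:
        source = cell["source"]
        # nbformat allows `source` to be a string or a list of strings.
        text = source if isinstance(source, str) else "".join(source)
        for line in text.splitlines():
            if cell["cell_type"] != "code":
                parts["markup"].append(line)
            elif line.lstrip().startswith(("!", "%")):
                # IPython shell escapes (!) and magics (%) are not valid Python,
                # so a plain Python parser would reject the whole cell.
                parts["shell_or_magic"].append(line)
            else:
                parts["python"].append(line)
    return parts


if __name__ == "__main__":
    for kind, lines in split_notebook(SAMPLE_NOTEBOOK).items():
        print(kind, lines)
```

A tool that expects a `.py` file sees none of this structure, which is why the same code that would be flagged in a script can pass unnoticed in a notebook.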

What is NB Defense?

NB Defense (Notebook Defense) is a free tool and the first product launch of Protect AI, a security firm focused on improving the security of AI and ML workloads for everyone. You can use it to scan a single notebook for problems while you are working in Jupyter Lab, receiving notifications in your workspace much like an IDE guiding you on syntax. As a CLI, NB Defense scans your projects, looking over all dependencies and notebooks to report on entire repositories or folders.

It empowers you to evaluate your notebooks for issues like leaked credentials, PII disclosure, licensing problems, and vulnerabilities in your dependencies. The scans can be performed from any local or cloud environment you are using, and can even run before anything enters version control.

With NB Defense you are improving the security posture of your data science practice and helping protect the data and other assets that power your work. Scanning your notebooks like this should become just like locking the doors of your house: a quick, common practice that leaves you with better peace of mind.

Securing Your Work with NB Defense

The process for securing your work with NB Defense is:

- Sign up for NB Defense

- Install the CLI

- Install the Jupyter Lab Extension (JLE)

- Scan Your Project with NB Defense’s CLI (Review your Project)

- Review the results and fix issues

- Working with the Jupyter Lab Extension (Securing as you work)

Sign up for NB Defense

NB Defense is our first release and is free to use. When your request is processed, we will email you your license keys along with links to our product documentation.

Install the CLI

There are two ways to use NB Defense. The CLI offers scans for PII exposure, leaked secrets, licenses that are not friendly to commercial usage, and vulnerabilities in your dependencies. The JLE operates on a single notebook at a time and checks it for PII exposure or leaked secrets.

You can install and use both. Think of the CLI as the full deep check and the JLE as a quick way to assess your current work.

To begin, create a virtual environment for NB Defense's CLI. If you are using Conda:

conda create --name nbdscannner

If you are using venv:

python3 -m venv ./nbdscannner

Next, activate the environment so that it is used when you install packages. For Conda:

conda activate nbdscannner

Alternatively, for venv:

source nbdscannner/bin/activate

Now install NB Defense with pip, taking care to replace {LICENSE_KEY} with the one provided to you:

pip install nbdefense --extra-index-url https://license:{LICENSE_KEY}@api.keygen.sh/v1/accounts/protectai/artifacts

Then add the license key as an environment variable:

export PROTECTAI_LIC_KEY={LICENSE_KEY}

After installing you can navigate to your project and scan it:

cd ~/projects/data_science_project

nbdefense scan -r

Install the Jupyter Lab Extension

As with the CLI, installing the extension takes only a few shell commands. Once again you will need an activated Python environment. Unlike the CLI, the extension needs to be installed in the same environment that runs Jupyter itself. Open a new terminal; the commands below will guide you through installing and configuring the extension to support scanning from within Jupyter Lab.

Start by activating the main Python environment where you run Jupyter Lab.

Next, install the extension with pip, once again replacing {LICENSE_KEY} with your license key:

pip install nbdefense_jupyter --extra-index-url https://license:{LICENSE_KEY}@api.keygen.sh/v1/accounts/protectai/artifacts

Enable the extension:

jupyter server extension enable nbdefense_jupyter

When you run Jupyter Lab now, NB Defense is ready to scan your notebooks.

Scan Your Project with NB Defense’s CLI

Your first scan via the CLI or the JLE will also install a specific version of a language model to enable the detection of PII locally, keeping your information only on your system.

Open your terminal, navigate to your project and scan it with NB Defense:

cd ~/projects/my_data_science_project

nbdefense scan -r

If this is the first time you are running NB Defense, the tool will ask for your permission to install additional tools to look for secrets, along with a local language model to power PII detection.

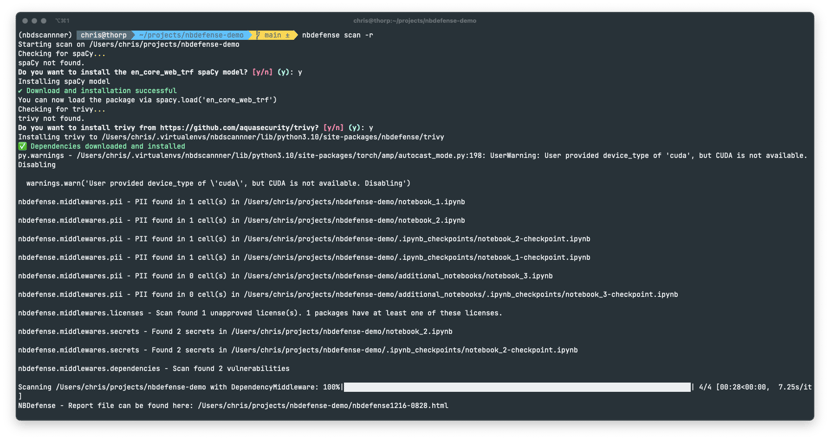

As soon as the extra tools are loaded, it will start scanning your notebooks and other content. You can follow its progress via standard output, which should look something like this:

Once the command has returned, you can view the results in a newly created HTML file. It has a name like nbdefense1206-1259.html, where the timestamp at the end corresponds to when your scan was completed. Open this file to view your results.

Review the Results

After opening the file with your browser you will see the full list of issues broken down by category for your project. Read over the entire report to learn what issues you need to fix.

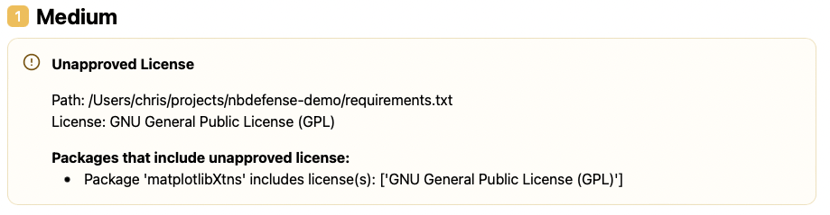

Not all open source licenses are viable for commercial use, and putting them in your work could expose your company to fines or even the disclosure of its IP. This check looks for licenses that may not be a good fit for your work. It could look like this:

Given that the GNU General Public License (GPL) is not viable for your work, the recommendation here would be to remove the matplotlibXtns dependency from your project.
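If you are curious what a check like this involves, the sketch below reads the license metadata that installed packages declare about themselves and flags anything mentioning the GPL. It is a rough illustration using the standard library's `importlib.metadata`, not NB Defense's implementation. The `FLAGGED_KEYWORDS` policy is a hypothetical example, and which licenses are acceptable is a decision for your organization.

```python
from importlib import metadata

# Hypothetical policy: substrings that mark a license for manual review.
# Note that "GPL" also matches "LGPL", which has different terms.
FLAGGED_KEYWORDS = ("GPL", "General Public License")


def needs_review(license_text: str) -> bool:
    """Return True if the license string mentions a flagged keyword."""
    lowered = license_text.lower()
    return any(keyword.lower() in lowered for keyword in FLAGGED_KEYWORDS)


def flagged_packages() -> list:
    """List (name, declared license) pairs for installed packages to review."""
    findings = []
    for dist in metadata.distributions():
        name = dist.metadata.get("Name") or "unknown"
        # Packages declare licenses in the License field, in Trove
        # classifiers, in both, or in neither; the data is self-reported.
        license_field = dist.metadata.get("License") or ""
        classifiers = [
            c for c in (dist.metadata.get_all("Classifier") or [])
            if c.startswith("License ::")
        ]
        declared = "; ".join(filter(None, [license_field, *classifiers]))
        if needs_review(declared):
            findings.append((name, declared))
    return sorted(findings)


if __name__ == "__main__":
    for name, declared in flagged_packages():
        # Some packages put the full license text in the field, so truncate.
        print(f"{name}: {declared[:80]}")
```

Because the metadata is self-reported and sometimes missing, treat a result like this as a prompt for a closer look, not as a legal determination.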

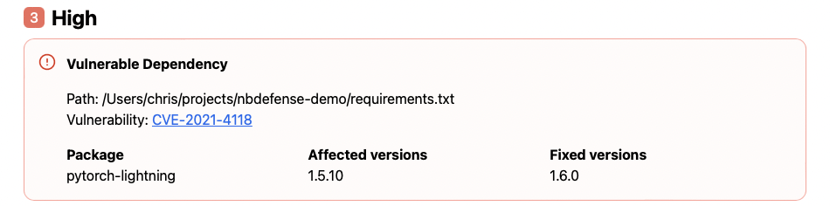

Focusing more specifically on security, you may have an alert about a vulnerable dependency:

Your version of pytorch-lightning contains a known vulnerability (CVE) and needs to be updated. If you can, update your requirements.txt file to reflect the new version, verify that your code still works, and scan again to confirm that the vulnerable version is no longer referenced. If this is outside of your control, raise the issue with the relevant colleague so they can fix it.
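As a quick sanity check after editing requirements.txt, a short script can confirm that a pinned version is at least the one that contains the fix. This is a simplified sketch: the function names are made up for this example, it only understands `name==1.2.3` style pins with numeric versions, and the version numbers in the usage section are placeholders, not real advisory data. Take the fixed version from your scan report or the advisory itself.

```python
import re
from itertools import zip_longest

# Matches simple pins such as "pytorch-lightning==1.2.0" (numeric versions only).
PIN = re.compile(r"^\s*([A-Za-z0-9._-]+)\s*==\s*([0-9]+(?:\.[0-9]+)*)\s*(?:#.*)?$")


def normalize(name: str) -> str:
    """Normalize a package name the way PyPI does (PEP 503)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def is_older(version: str, fixed_in: str) -> bool:
    """Compare dotted numeric versions, treating missing parts as zero."""
    left = [int(part) for part in version.split(".")]
    right = [int(part) for part in fixed_in.split(".")]
    for a, b in zip_longest(left, right, fillvalue=0):
        if a != b:
            return a < b
    return False


def outdated_pins(requirements_text: str, fixed_versions: dict) -> list:
    """Return (name, pinned, fixed_in) for pins older than the fixed version."""
    findings = []
    for line in requirements_text.splitlines():
        match = PIN.match(line)
        if not match:
            continue  # unpinned, commented, or non-numeric versions are skipped
        name, pinned = normalize(match.group(1)), match.group(2)
        fixed_in = fixed_versions.get(name)
        if fixed_in and is_older(pinned, fixed_in):
            findings.append((name, pinned, fixed_in))
    return findings


if __name__ == "__main__":
    # Placeholder versions for illustration only, not a real advisory.
    requirements = "pandas==1.5.3\npytorch-lightning==1.2.0\n"
    print(outdated_pins(requirements, {"pytorch-lightning": "1.3.0"}))
```

A script like this complements a rescan but does not replace it, since it knows nothing about transitive dependencies or version ranges.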

You may also be presented with PII or secrets that are found in specific notebooks. There you will see the filename, cell number, and specific input or output that is problematic. Simply edit the files to remove those items if they are indeed security or privacy issues.
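To give a feel for what a finding such as "cell 3, input" refers to, here is a minimal sketch that walks a notebook's cells and checks both the source and the saved outputs for one well-known secret format, the AWS access key ID. It is an illustration only and not how NB Defense detects secrets: real scanners use many more patterns, and the 1-based cell numbering here is an assumption. The key in the sample is the placeholder value AWS uses in its own documentation.

```python
import re

# Long-term AWS access key IDs start with "AKIA" followed by 16 uppercase
# letters or digits. This is one pattern only, chosen for illustration.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def _text(value) -> str:
    """nbformat stores text as either a string or a list of strings."""
    return value if isinstance(value, str) else "".join(value)


def find_key_ids(notebook: dict) -> list:
    """Return (cell_number, 'input' or 'output') for each part with a match."""
    findings = []
    for number, cell in enumerate(notebook["cells"], start=1):
        if AWS_KEY_ID.search(_text(cell.get("source", ""))):
            findings.append((number, "input"))
        for output in cell.get("outputs", []):
            # Stream outputs keep text under "text", rich results under
            # data["text/plain"], and errors under "traceback".
            blob = "\n".join([
                _text(output.get("text", "")),
                _text(output.get("data", {}).get("text/plain", "")),
                "\n".join(output.get("traceback", [])),
            ])
            if AWS_KEY_ID.search(blob):
                findings.append((number, "output"))
                break
    return findings


# Hand-written sample; AKIAIOSFODNN7EXAMPLE is AWS's documentation placeholder.
SAMPLE = {
    "cells": [
        {"cell_type": "markdown", "source": ["# Load data\n"]},
        {
            "cell_type": "code",
            "source": ["print(os.environ['AWS_ACCESS_KEY_ID'])\n"],
            "outputs": [{"output_type": "stream", "name": "stdout",
                         "text": ["AKIAIOSFODNN7EXAMPLE\n"]}],
        },
        {"cell_type": "code", "source": 'key = "AKIAIOSFODNN7EXAMPLE"', "outputs": []},
    ]
}

if __name__ == "__main__":
    print(find_key_ids(SAMPLE))  # [(2, 'output'), (3, 'input')]
```

Note that the second cell's source looks innocent; the secret only appears in its saved output, which is exactly the kind of leak that is easy to miss in a manual review.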

Once you have reviewed and corrected your findings, simply scan again to confirm in the next report that the issues were fully mitigated.

NOTE: Do not commit the report files to your repository. They contain indicators of past problems.

Scanning a Notebook with the Jupyter Lab Extension

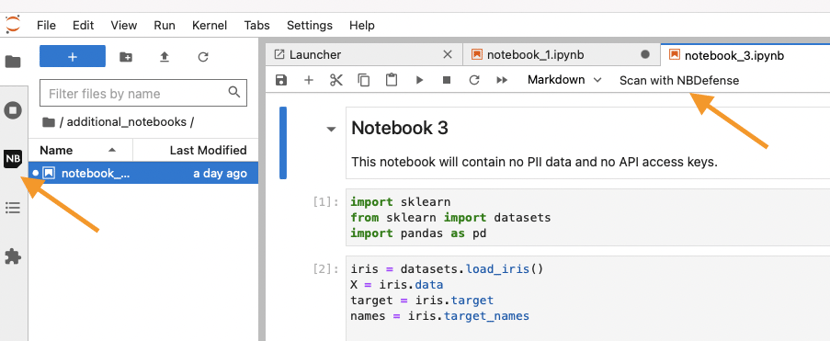

While the CLI is powerful, it is not available from the window where you are editing your notebooks, but the extension is. After installing it, you’ll see it as a small icon in your left panel and at the top of every open notebook:

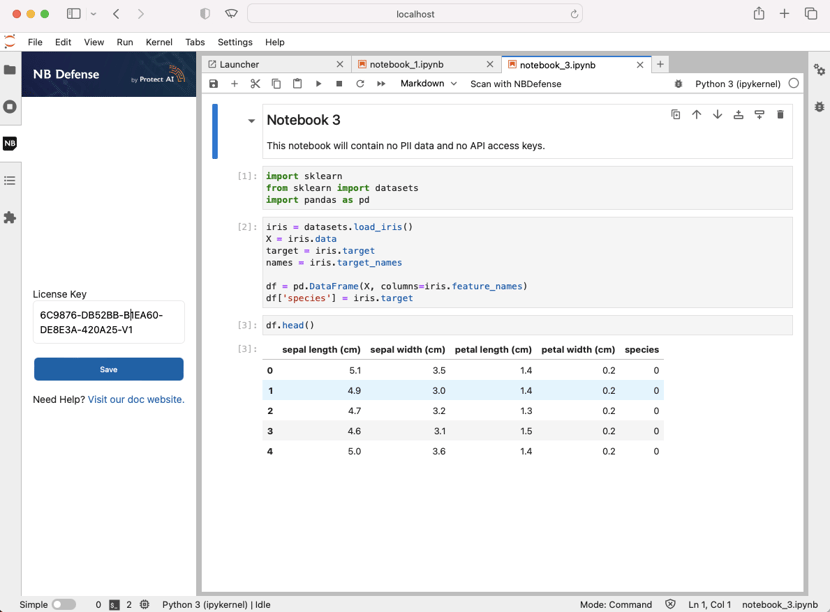

Clicking Scan with NB Defense will open the side panel and prompt you to enter your LICENSE_KEY if you have not already done so. Enter your Jupyter Lab Extension key, then click Save to continue (it will be saved from then on):

Once your key is accepted you can then click `Scan` to start the scan of your open notebook.

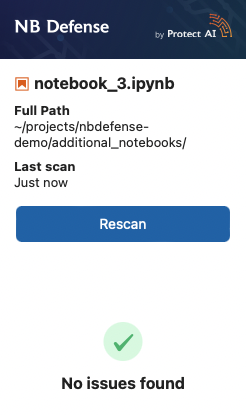

If nothing is found, you’ll see this:

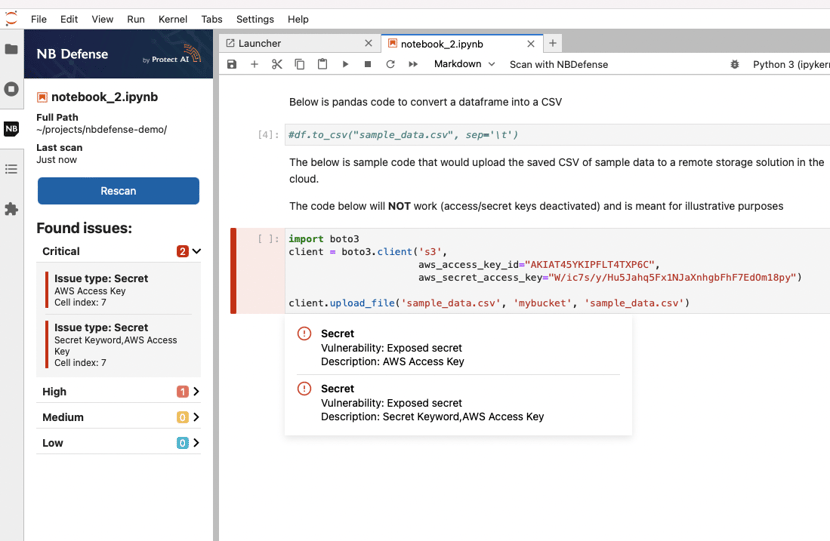

Most of the time, however, issues are found. You’ll be able to see the exact cell location and hover text guiding you on how to fix each one:

The prompts will highlight the problematic areas. After you remove the problematic content from the cells or output, simply scan again to confirm the changes worked.
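When the problem is in a cell's saved output, the simplest fix is usually to clear the outputs before saving or sharing the notebook. You can do that from the Jupyter interface, or with a few lines of Python as in the sketch below. The function name is made up for this example and it is not part of NB Defense; it assumes the standard nbformat 4 layout, where each code cell carries an `outputs` list and an `execution_count`.

```python
import copy

def strip_outputs(notebook: dict) -> dict:
    """Return a copy of the notebook with all code-cell outputs removed."""
    cleaned = copy.deepcopy(notebook)
    for cell in cleaned.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return cleaned


# Hand-written sample with a value in a saved output (illustrative only).
SAMPLE = {
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 7,
            "source": ["print(customer_email)\n"],
            "outputs": [{"output_type": "stream", "name": "stdout",
                         "text": ["jane.doe@example.com\n"]}],
        }
    ],
}

if __name__ == "__main__":
    cleaned = strip_outputs(SAMPLE)
    print(cleaned["cells"][0]["outputs"])  # []
```

To apply this to a file, load it with `json.load`, pass the result through `strip_outputs`, and write it back with `json.dump`. Clearing outputs does nothing for secrets typed into the cell source itself, so those still need to be edited by hand.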

This was just a sample of what you can do with NB Defense, and more content will be coming soon. For example, you can output the reports as JSON and integrate them into your CI/CD pipelines, or enable the tool as part of a pre-commit hook, giving you a fully automated check before sharing your work.

What’s Next?

NB Defense is just getting started. In the coming months, you’ll be able to store an online archive of your scans to refer to later, giving you a chronological record of the checks on your code and notebooks. Furthermore, you’ll be able to share your findings with others on your team, assign multiple users to specific projects, and set up custom scan configurations for yourself or your entire organization.

Jupyter Notebooks are the start of many data science projects, and they are where we started. There are many more components at play in any ML workload and we’re building out tools that will improve the security posture of each component.

References:

[1] ArsTechnica - Malware downloaded from PyPI 41,000 times was surprisingly stealthy
