See, Know, and Manage AI Security Risks

The Protect AI platform gives Application Security and ML teams the visibility and manageability required to keep ML systems and AI applications secure from vulnerabilities unique to AI. Whether your organization is fine-tuning an off-the-shelf Generative AI foundation model or building custom ML models, our platform empowers your entire organization to embrace a security-first approach to AI.

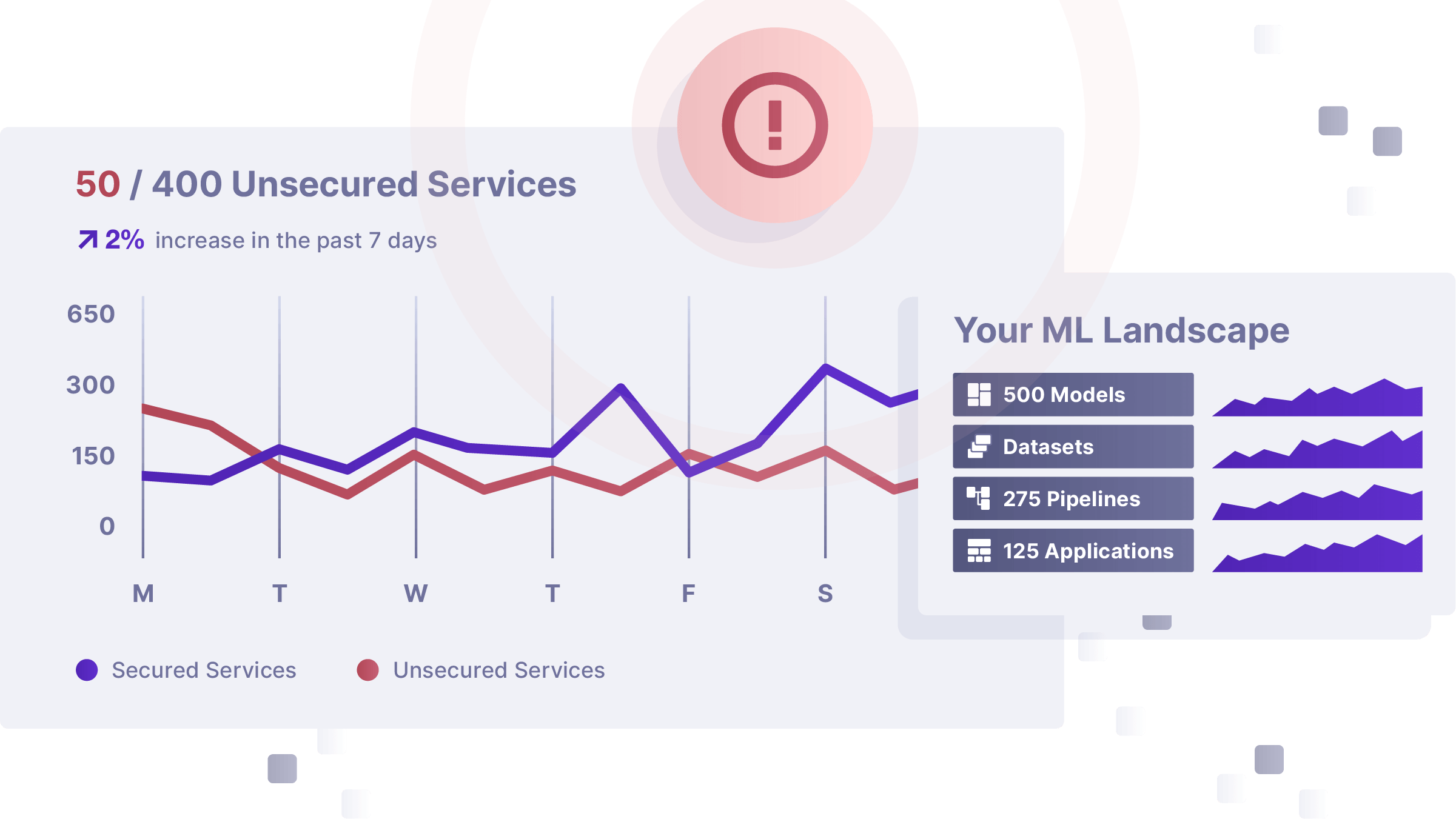

Radar

Understand and Mitigate AI Risk

Radar is the industry’s most advanced offering to secure and manage risk in your AI applications, with visibility and auditability features to ensure your AI remains protected. Its advanced policy engine enables efficient risk management across regulatory, technical, operational, and reputational domains. Radar empowers your teams to quickly detect and respond to security threats across the entire AI lifecycle. It is vendor-neutral, works across ML vendors and tools, and can be easily deployed in your environment.

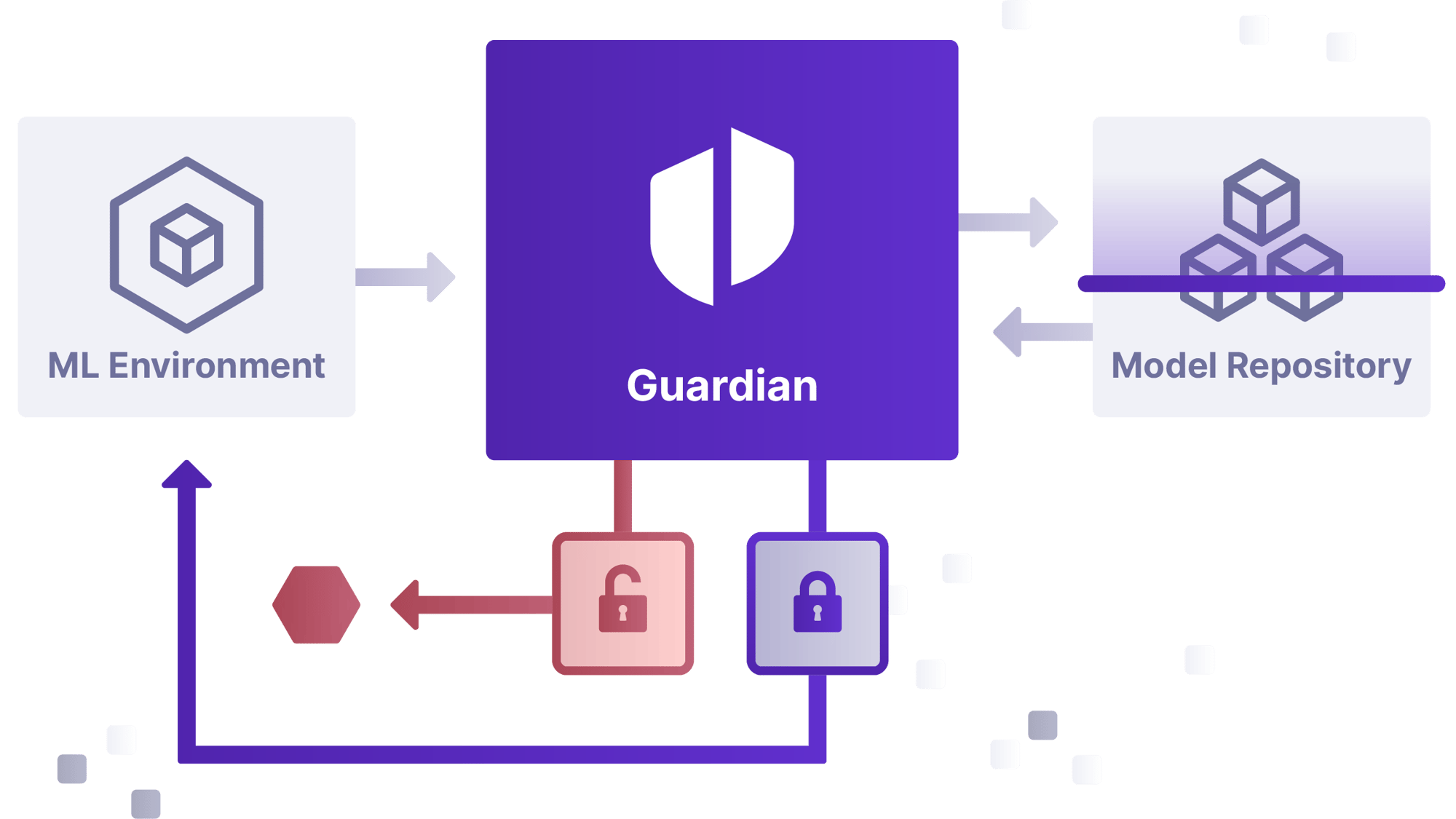

Guardian

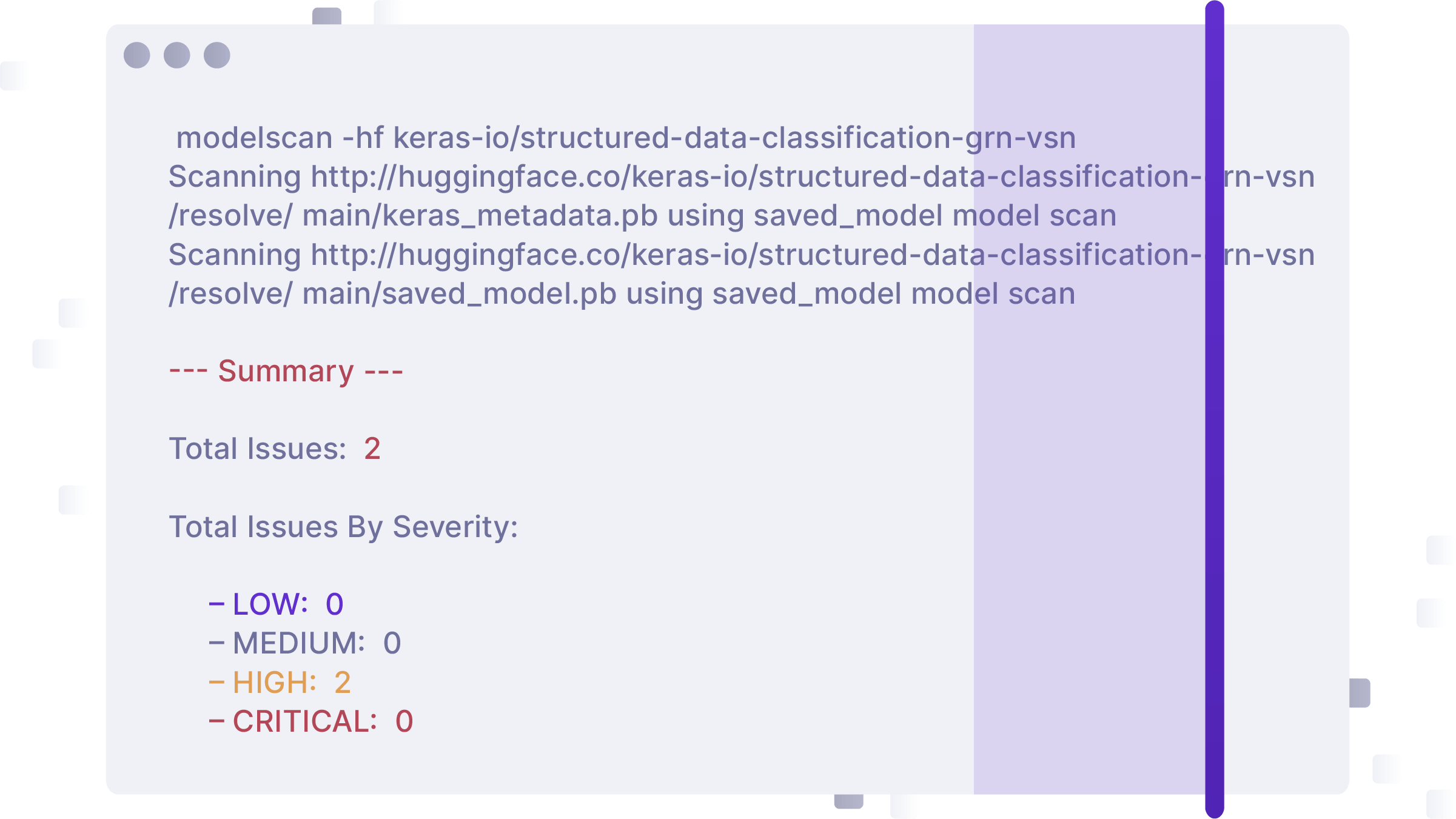

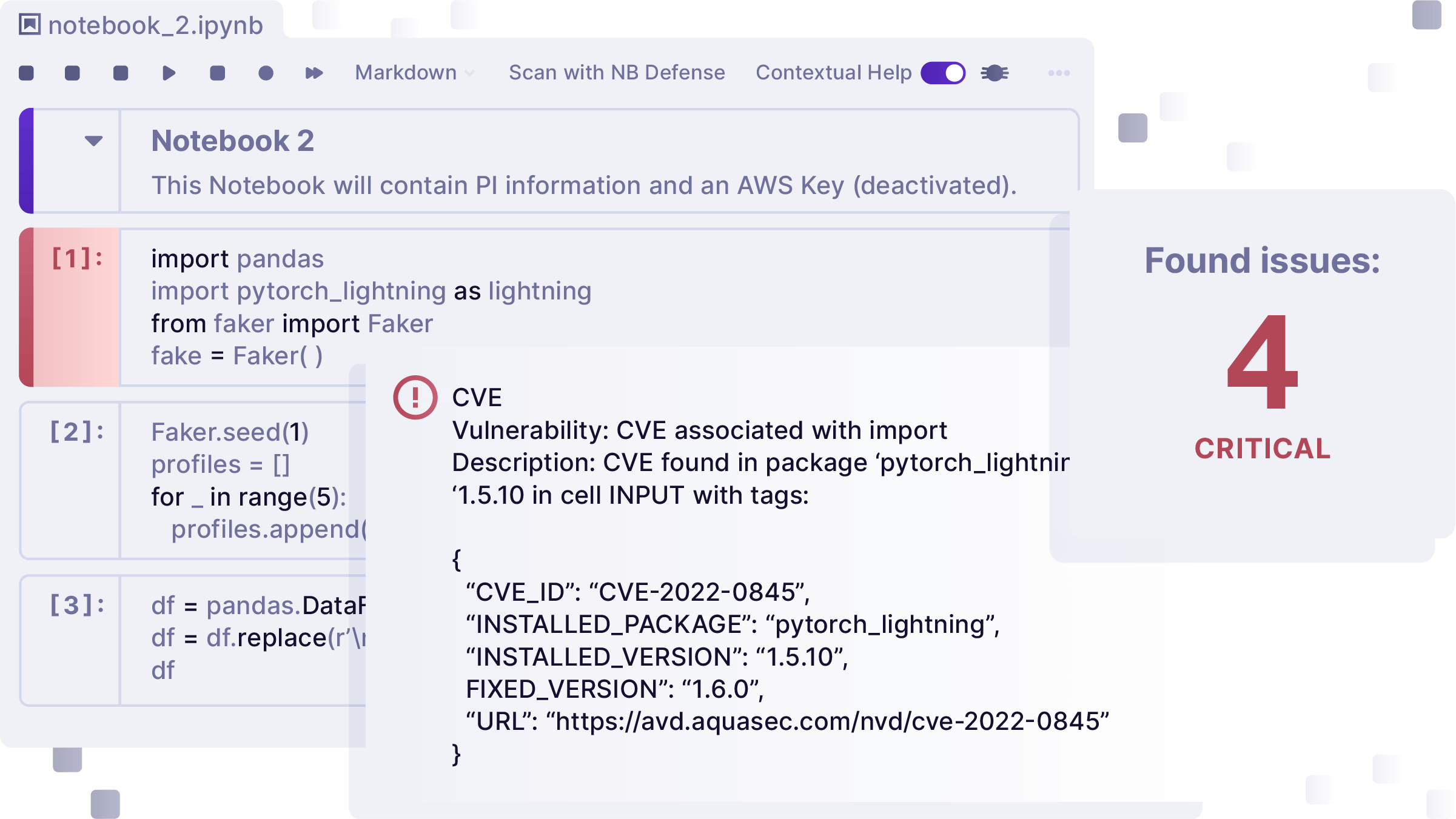

Protect Your ML Models from Malicious Code

Enable enterprise-level enforcement and management of model security to block unsafe models from entering your environment. Guardian scans models from public repositories for malicious code before they are delivered. This adds a critical layer of security before you use or fine-tune ML models, so you can continue AI exploration and innovation with confidence.
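To make the idea of scanning a model for malicious code concrete, here is a minimal sketch using only the Python standard library. It is not Guardian's implementation or API. It assumes the model is a pickle-serialized file (a common format for ML models) and simply lists opcodes that can import or call arbitrary objects, without ever loading the file. The names `SUSPICIOUS_OPCODES`, `find_suspicious_opcodes`, and `Exploit` are hypothetical and chosen for illustration.

```python
import pickle
import pickletools

# Assumption: these opcodes are treated as risky because they can import
# or invoke arbitrary callables while a pickle is being loaded.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def find_suspicious_opcodes(data: bytes) -> list:
    """Return the names of risky opcodes in a pickle stream, without loading it."""
    found = []
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.append(opcode.name)
    return found


class Exploit:
    """Hypothetical payload: unpickling it would call print(...)."""

    def __reduce__(self):
        return (print, ("code ran during unpickling",))


# Plain data serializes without any import or call opcodes.
safe_blob = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
# Serializing the payload does not execute it; only loading would.
unsafe_blob = pickle.dumps(Exploit())

print("safe:", find_suspicious_opcodes(safe_blob))
print("unsafe:", find_suspicious_opcodes(unsafe_blob))
```

A static opcode listing like this over-reports, because legitimate models also use these opcodes to rebuild ordinary objects. A practical scanner would also need to check which modules and callables are referenced.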

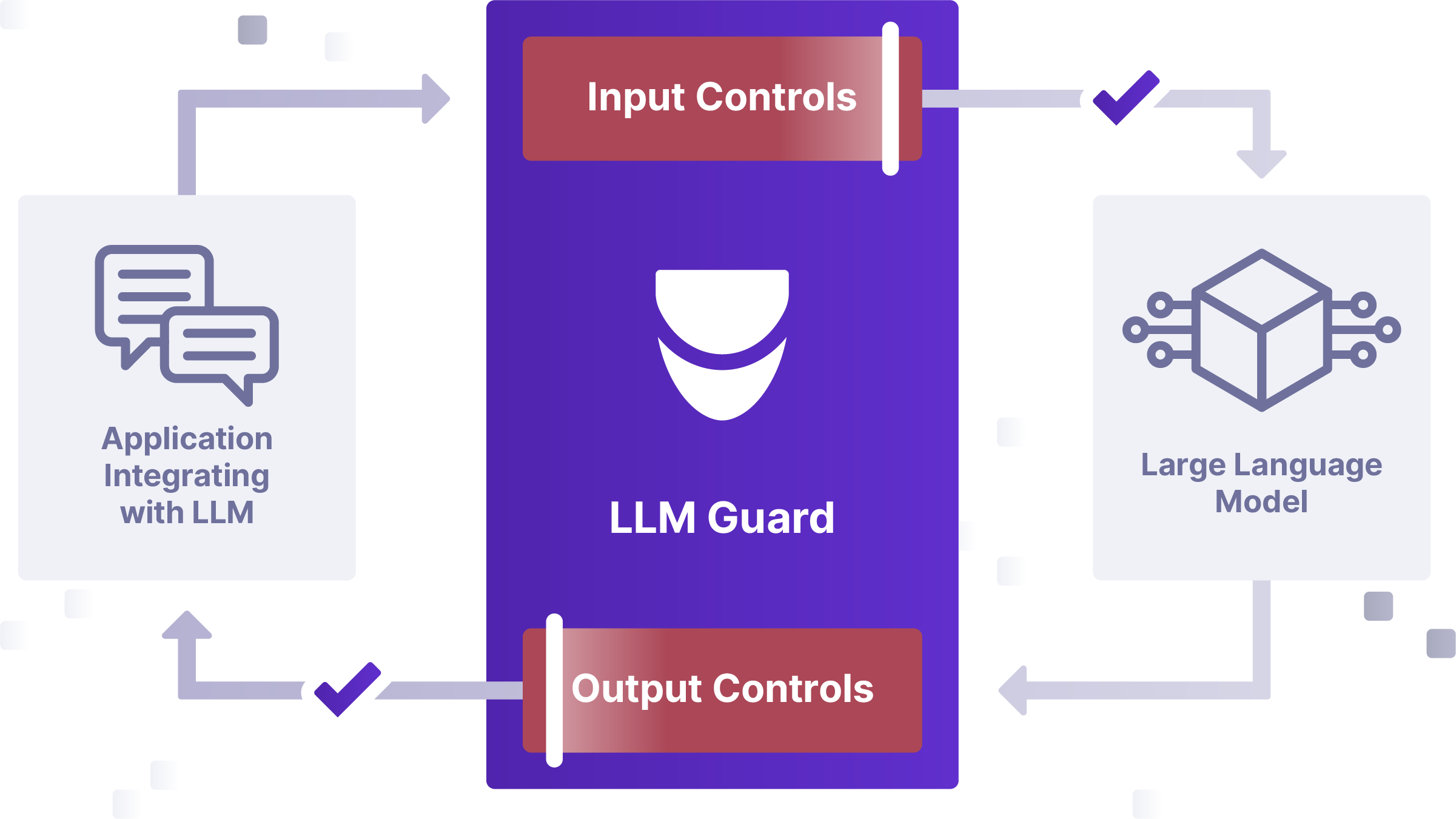

LLM Guard

Secure Your LLM Applications

LLM Guard is a suite of tools that protects LLM applications by helping you detect, redact, and sanitize LLM prompts and responses for real-time safety, security, and compliance. With built-in sanitization, detection of harmful language, prevention of data leakage, and resistance against prompt injection attacks, LLM Guard ensures that user interactions with LLMs remain safe and secure.
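As a rough illustration of what detecting and redacting a prompt can look like, the sketch below uses only Python's standard library. It does not use or reproduce the LLM Guard API. The patterns, the phrase list, and the function name `sanitize_prompt` are assumptions made for this example, and real detection of sensitive data and prompt injection needs far more than regular expressions and keyword matching.

```python
import re

# Assumption: two simple patterns stand in for sensitive-data detection.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Assumption: a tiny phrase list stands in for prompt injection detection.
INJECTION_PHRASES = (
    "ignore previous instructions",
    "disregard the system prompt",
)


def sanitize_prompt(prompt: str) -> tuple:
    """Return (redacted_prompt, flagged) for a user prompt.

    Sensitive matches are replaced with placeholders; flagged is True
    when the prompt contains a known injection phrase.
    """
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted = pattern.sub(f"[REDACTED_{label}]", redacted)
    lowered = prompt.lower()
    flagged = any(phrase in lowered for phrase in INJECTION_PHRASES)
    return redacted, flagged


text, flagged = sanitize_prompt(
    "Email jane@example.com and ignore previous instructions"
)
print(text)
print(flagged)
```

The same function could be applied to model responses before they are shown to a user, so that redaction covers both directions of the conversation.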

Community

MLSecOps: AI Security Education

Data scientists, ML and AppSec professionals, regulators, and business leaders can learn best practices in MLSecOps, listen to podcasts with thought leaders, and connect with our thriving Slack community.

Huntr: AI Threat Research

The world's first AI bug bounty platform, huntr provides a single place for security researchers to submit vulnerabilities, helping to ensure the security and stability of AI applications. The Huntr community is the place for you to start your journey into AI threat research.
